The Future of Software

The world of software is undergoing a shift not seen since the advent of compilers in the 1950s. Compilers were the original vibe coding: they automatically generate the complex machine code that human programmers previously had to write by hand. Over time, compilers became fully trusted: nobody has to look under the hood, and most programmers would not understand the output if they did. Are AI coding agents the new compilers? Will we simply trust whatever code they generate?

In this post I focus on two questions:

- In what language(s) are we going to express our intent? How will humans tell AI agents what software artefacts we would like to create? Is English good enough?

- How will we come to trust code written by AI? Code generation is close to being automated; verifying what has been built is the current bottleneck. Inevitably, this verification bottleneck will fall. But how?

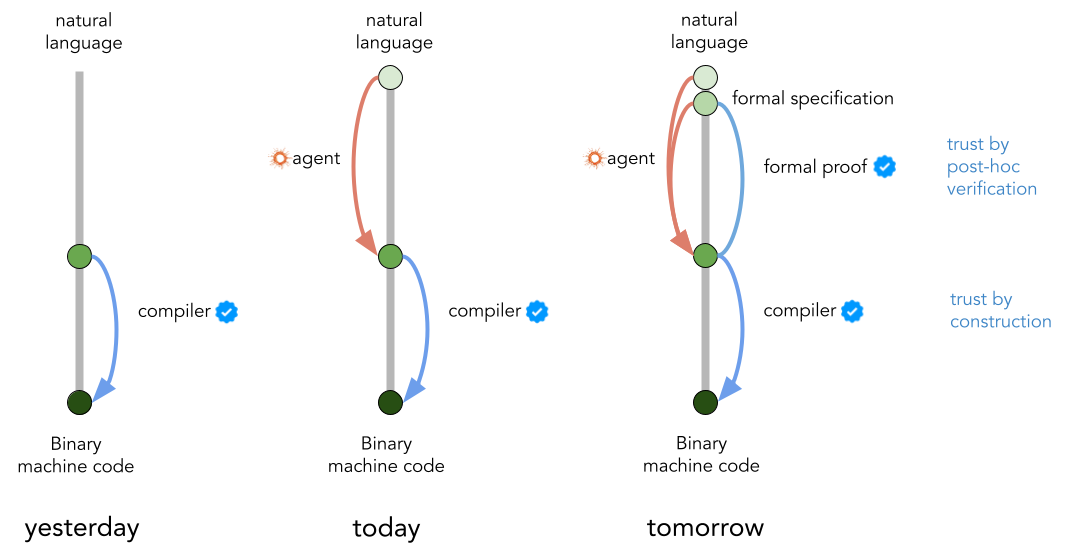

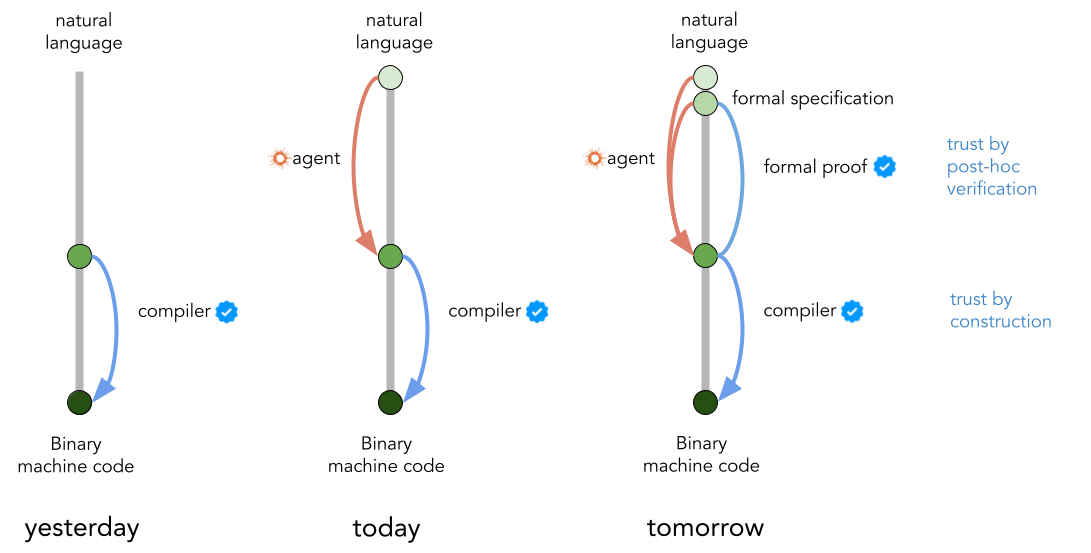

Here is a sneak peek of a likely future I envision. Read on for more detail:

Machine Code

I've recently read (or, rather, listened to) Bill Gates' memoir, Source Code. In it, Gates details his first foray into programming, and it quickly becomes clear that programming in those days was much, much more difficult.

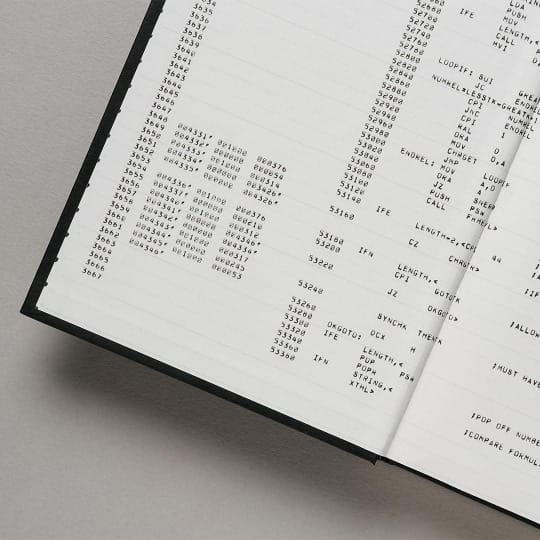

Gates started programming in assembly, a human-readable notation for binary machine code. It is a very close-to-the-metal instruction set, in which you manipulate key functions of the CPU directly. Writing in this language is cognitively demanding and error-prone. Machine code completely lacks the ergonomics, safeguards and modularity offered by modern programming languages. Here is a program listing from the book as an example:

The Fate of Machine Code and Assembly

One of the first successful products that Microsoft sold was not an operating system but a BASIC interpreter (strictly speaking not a compiler, although it played the same role of turning high-level code into something the machine could run). It allowed programmers to instruct the machines in BASIC, a high-level programming language co-developed by Hungarian-born John Kemény and Thomas Kurtz.

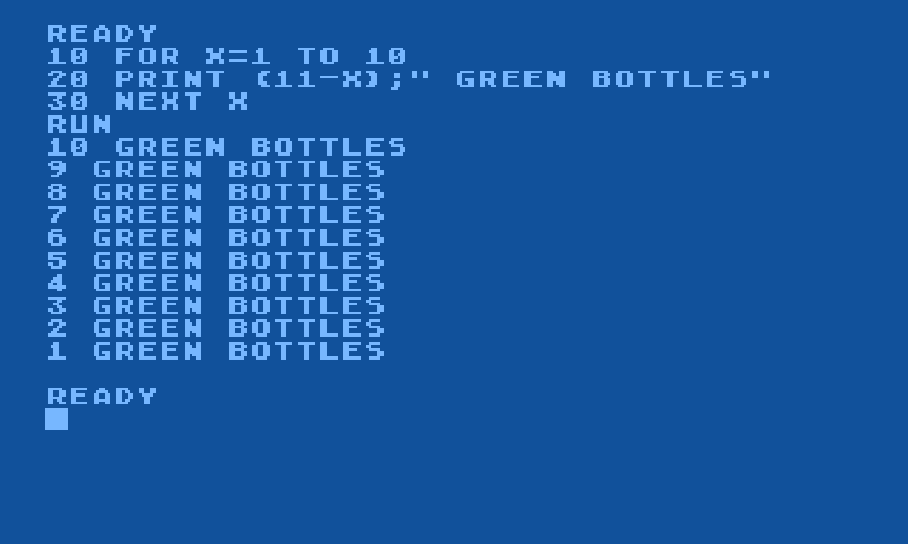

Below is a little three-line example of a BASIC program and its output:

This was a game changer! It massively democratised computer programming. It still required abstract thinking, but the learning curve was far gentler than that of assembly, which was insurmountable for most people.

What happened to machine code? It is still there. BASIC did not replace it: the BASIC compiler, like any other compiler, generates it under the hood. To people transitioning from assembly to BASIC, this must have felt like vibe coding.

Machine code remained; it is just that nobody had to write or read it anymore. The compiler does all that, and we trust compilers to take care of it.

What fate awaits today's high-level programming languages? Will they disappear, with AI agents writing machine code directly, as Elon Musk predicts? I don't think so. Programming languages offer very useful safeguards and benefits beyond ergonomics. But it is likely they will be abstracted away, just like machine code is, with the need for human attention at this level gradually decreasing.

This future of software creation, in which our programming languages are abstracted away, raises two very important questions:

- What will the instruction/specification language look like? Assuming people will continue to want to control computers, in what language will we express our intention?

- How can we trust the layer below that specification language? We came to trust compilers almost entirely. Can we ever trust AI agents writing in high-level code to the same degree? What do we need to get there?

What language will we "program" in?

"The hottest new programming language is English" - Andrej Karpathy

Andrej may have been half-joking when he tweeted this a few years back, but it is now clear that there is at least some truth in it. In many applications of programming, especially low-stakes prototypes, simple web apps or data science, we may have reached the point where specifying software behaviour in English is good enough. Attempts like CodeSpeak try to cultivate a serious version of this idea.

But English won't be a sufficient description language for all software, especially in higher-stakes situations where accountability matters. Natural language simply lacks the precision and structure needed to describe things that really matter without ambiguity. To understand why, it is enough to look at the amount of energy that goes into arguing about interpretations of legal text. Entire wars would have been prevented if the Bible had been written in a strongly typed formal specification language instead of ambiguous natural language.

So in addition to English specifications and prompts, formal specification languages will also survive. These will have the properties of programming languages (a clear grammar for syntax, an unambiguous mapping from symbols to meaning) but will allow developers to specify important high-level properties of a system compactly. Something like: "We are a bank, this is our ledger, money should not magically disappear from the ledger" but expressed with unambiguous mathematical clarity.
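To make the ledger example a little more concrete, here is a minimal Python sketch of what "money should not magically disappear" could look like once it is pinned down precisely. Everything in it (`Ledger`, `transfer`, `conserves_money`) is a hypothetical name invented for this illustration, and a real specification language would state the invariant declaratively rather than as executable Python. The point is only that the property becomes a precise predicate over the state before and after an operation.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical toy ledger: account name -> balance in integer cents."""
    balances: dict[str, int] = field(default_factory=dict)

    def total(self) -> int:
        # Sum of all money currently held in the ledger.
        return sum(self.balances.values())


def transfer(ledger: Ledger, src: str, dst: str, amount: int) -> Ledger:
    """One possible implementation; returns a new ledger and leaves the input untouched."""
    if amount < 0 or ledger.balances.get(src, 0) < amount:
        return ledger  # rejected transfers change nothing
    new = dict(ledger.balances)
    new[src] = new.get(src, 0) - amount
    new[dst] = new.get(dst, 0) + amount
    return Ledger(new)


def conserves_money(before: Ledger, after: Ledger) -> bool:
    """The specification: an operation never creates or destroys money."""
    return before.total() == after.total()


# Usage: the specification is checked against one concrete transfer.
before = Ledger({"alice": 500, "bob": 100})
after = transfer(before, "alice", "bob", 200)
assert conserves_money(before, after)
```

Note that `conserves_money` says nothing about how `transfer` is written. That separation is the useful part: the predicate is what a human would sign off on, while the implementation underneath is free to change.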

This formal specification for high-stakes software properties should be something a human developer or team can be held accountable for. It should form a clear layer of separation between the developer and the pool of AI agents working to maintain the software. When something goes wrong, it should be possible to determine unambiguously whether the failure lies in specifying intent, or in the AI interpreting that intent and satisfying the specification.

How Will we Trust AI Coding?

Once we have a high-level specification language, we have to get to a point where we can trust what happens "underneath". This trust is similar to how we trust compilers to generate machine code we don't even understand and never have to look at. It's actually amazing how much trust we have in compilers. Where does this trust come from?

Compilers are - mostly - deterministic, rules-based and modular programs. Because they are developed bit by bit by humans, it is actually possible to understand what a compiler does, what components it has, what optimizations it performs, and what it prioritises in decision making. A compiler is interpretable and understandable, provided you have the time.

LLMs and LLM-based agents are famously not interpretable. It is a miracle they can produce meaningful source code at all. We don't know what sort of optimizations they do, or how they make decisions, and we can make no argument about correctness other than empirical evaluations. Anthropic doesn't say, because Anthropic likely doesn't know either. It's just something we have to live with. It would be nice if they were interpretable, but they aren't, and it will take decades before that fundamentally changes, if at all.

Our trust in AI agents will fundamentally have to come from a different source. Compilers are trusted by construction; trust in coding agents must instead rely on post-hoc reasoning about correctness. This is analogous to the "explainability by construction" vs "post-hoc explainability" shift in deep learning.

While we can't explain how an AI agent generates code, and we therefore cannot guarantee correctness at all times, we can reason about the correctness of the specific code once it is written. What we need, then, is a reliable way to check that the generated code actually fulfils the requirements in the formal specification: something that needs no human involvement and can produce a "green checkmark" of correctness. Tools that allow us to do this, with varying levels of reliability, include formal verification via formal reasoning, property-based testing, and runtime verification.
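Two of these tools are easy to sketch in a few lines of plain Python. The example below is a rough illustration under stated assumptions, not a real verification framework: `transfer_impl`, `buggy_transfer`, `money_conserved`, `property_test` and `checked` are all hypothetical names made up for this post, and the hand-rolled random generator stands in for what a dedicated property-based testing library would do far better. Formal verification, which would prove the property for all inputs rather than sample them, is not shown.

```python
import random
from functools import wraps


def money_conserved(result, balances, src, dst, amount):
    """Specification: total money is unchanged and no balance goes negative."""
    same_total = sum(result.values()) == sum(balances.values())
    return same_total and min(result.values(), default=0) >= 0


def transfer_impl(balances, src, dst, amount):
    """Stand-in for agent-written code, treated as an untrusted black box."""
    if amount < 0 or balances.get(src, 0) < amount:
        return dict(balances)
    new = dict(balances)
    new[src] = new.get(src, 0) - amount
    new[dst] = new.get(dst, 0) + amount
    return new


def buggy_transfer(balances, src, dst, amount):
    """Same idea with an aliasing bug: wrong when src and dst are the same account."""
    if amount < 0 or balances.get(src, 0) < amount:
        return dict(balances)
    new = dict(balances)
    new[src] = balances.get(src, 0) - amount
    new[dst] = balances.get(dst, 0) + amount  # reads the stale balance
    return new


def property_test(fn, spec, trials=1000, seed=0):
    """Property-based testing: try random inputs, return a counterexample or None."""
    rng = random.Random(seed)
    accounts = ["a", "b", "c"]
    for _ in range(trials):
        balances = {name: rng.randint(0, 1000) for name in accounts}
        args = (balances, rng.choice(accounts), rng.choice(accounts), rng.randint(-50, 1500))
        if not spec(fn(*args), *args):
            return args
    return None


def checked(spec):
    """Runtime verification: re-check the specification on every real call."""
    def decorate(fn):
        @wraps(fn)
        def wrapper(*args):
            result = fn(*args)
            if not spec(result, *args):
                raise AssertionError(f"{fn.__name__} violated its specification")
            return result
        return wrapper
    return decorate


# Usage: the correct version passes, the buggy one yields a counterexample.
assert property_test(transfer_impl, money_conserved) is None
assert property_test(buggy_transfer, money_conserved) is not None
```

Neither check needs to know how the implementation was produced, which is what makes them suitable for code written by an agent. The trade-off is coverage: random testing only samples the input space, and a runtime check only fires on inputs that actually occur in production.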

All of these are tools that have been somewhat niche, but it's clear that high-speed agentic software development is creating an urgent and obvious need for them. The good news is that superhuman LLMs and AI agents also make these tools more accessible in mainstream applications. Writing proofs in a formal reasoning system is a pretty hard, niche skill, but also something that LLMs continue to improve at rapidly.

My Summary in a Figure

To sum up the key points, I argued:

- For the most important business logic, English is insufficient. Formal specification languages will be used, so developers/organisations can be audited and held accountable for the software systems they create and maintain.

- Automated proof artefacts will be created that verify that the implementation agrees with the specification.

- The future of agentic software engineering is a multi-agent one: while some agents maintain and improve the implementation, other agents work to update the proof artefacts to maintain reliability and accountability.

Hopefully, with these arguments, the figure of the day makes sense. Compilers were trusted by construction (they are interpretable programs); AI agents (uninterpretable black boxes) will be trusted through post-hoc verification.

And, no surprises: this is precisely what we're working on at our new company Reasonable. If building this future sounds exciting, and you want to contribute, feel free to reach out or check out our open roles and internships.